How AI is Used in USA Schools: From ChatGPT Classrooms to Federal Policy in 2025

Understanding how AI is used in USA schools has become critical as artificial intelligence transforms American education at unprecedented speed. From elementary classrooms to high school robotics labs, AI use now spans everything from personalized tutoring to administrative automation, marking a fundamental shift in teaching and learning across the nation.

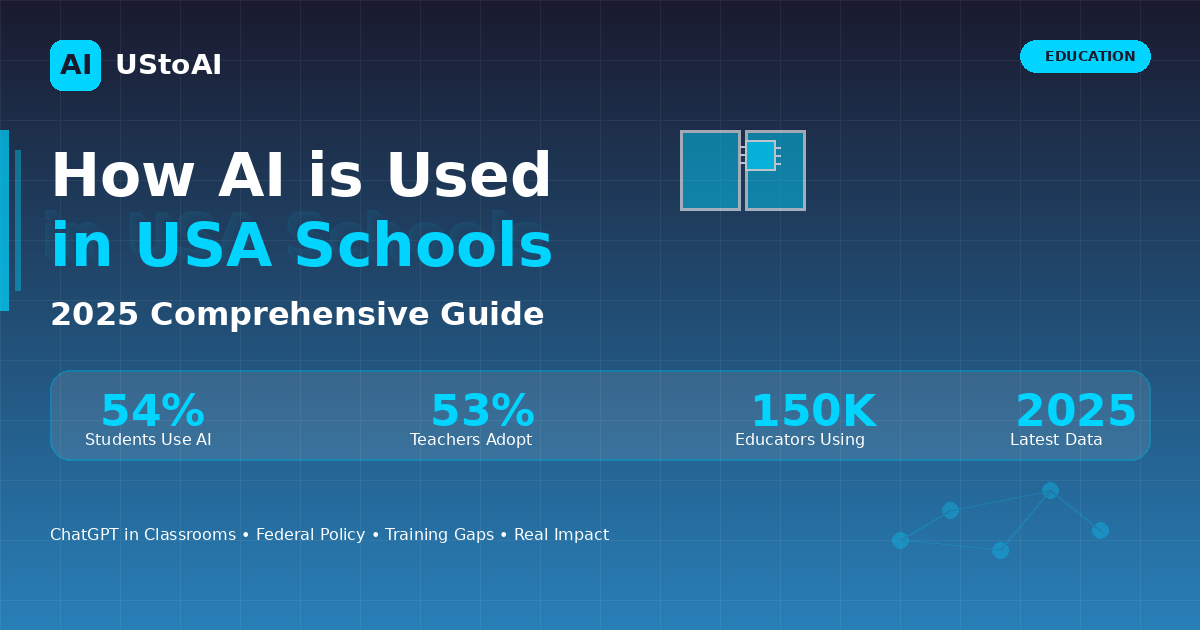

The landscape of American education shifted dramatically in late 2025 when the U.S. Department of Education issued comprehensive guidance on artificial intelligence use in schools, followed by OpenAI’s announcement of ChatGPT for Teachers—a free platform for 150,000 educators nationwide. These developments crystallize a transformation that’s been building since ChatGPT’s debut in late 2022, but the pace of change has accelerated beyond what most education experts anticipated.

The Current State: Adoption Outpaces Training

Recent data from the RAND Corporation reveals a striking reality: 54% of American students and 53% of English, math, and science teachers now use AI for schoolwork—increases of more than 15 percentage points compared to just one to two years ago. The numbers climb higher in secondary education, with progressively more middle and high school teachers integrating AI into daily instruction.

Yet this rapid adoption reveals a troubling gap. While 60% of teachers reported using AI technologies for work purposes in a 2025 Twinkl survey of 6,500 educators, 76% acknowledged receiving no formal training. The disconnect between usage and preparation creates what some describe as educational whiplash—teachers and students racing ahead while institutional support lags behind.

The data becomes more nuanced when examined closely. According to research from Third Space Learning, only 23.3% of sixth-grade teachers and elementary administrators have used AI for math test preparation, primarily relying on ChatGPT or specialized tools like Teachmate. The variation suggests that how AI is used in USA schools depends heavily on subject area, grade level, and individual teacher initiative rather than systematic implementation.

Federal Push: Executive Orders Meet Classroom Reality

President Trump’s April 2025 Executive Order, “Advancing Artificial Intelligence Education for American Youth,” set the stage for sweeping changes. U.S. Secretary of Education Linda McMahon emphasized the administration’s vision: “Artificial intelligence has the potential to revolutionize education and support improved outcomes for learners. It drives personalized learning, sharpens critical thinking, and prepares students with problem-solving skills vital for tomorrow’s challenges.”

The Department of Education’s subsequent guidance outlined three key priorities: expanding AI and computer science education in K-12 schools, supporting professional development for educators on teaching AI fundamentals, and using AI to personalize learning through differentiated instruction. The guidance affirms that such uses are allowable under existing federal education programs, provided they align with statutory and regulatory requirements.

This federal framework aims to address what Catherine Truitt, former North Carolina superintendent of public instruction, identified as a critical gap: only 26 states had issued AI guidance as of spring 2025. Without state-level direction, schools often resort to blanket bans—creating what Truitt calls an equity issue that denies students access to tools reshaping the broader economy.

OpenAI’s Classroom Gambit: ChatGPT for Teachers

In November 2025, OpenAI launched ChatGPT for Teachers, offering verified U.S. K-12 educators free access through June 2027 to a workspace specifically designed for educational use. The platform includes unlimited access to GPT-5.1, integration with Google Drive and Microsoft 365, collaboration tools, and, critically, education-grade security meeting FERPA requirements.

The announcement came with impressive credentials. OpenAI partnered with 16 school districts representing approximately 150,000 educators, including major systems like Houston ISD, Dallas ISD, and Prince William County Schools in Virginia. Leah Belsky, OpenAI’s vice president of education, framed the initiative clearly: “Our objective here is to make sure that teachers have access to AI tools as well as a teacher-focused experience so they can truly guide AI use.”

For Dr. LaTanya McDade, superintendent of Prince William County Schools, the partnership represents more than technology access. “ChatGPT for Teachers will go a long way in supporting our teachers and building teacher self-efficacy and agency in the work they do,” she explained, positioning the tool as foundational to the district’s innovation-driven strategic plan.

OpenAI isn’t alone in this space. Companies like Brisk Teaching, MagicSchool AI, and SchoolAI have provided teacher-specific generative AI tools with K-12 privacy protections for over two years. The competitive landscape reflects growing recognition that education represents a massive, largely untapped market for AI applications.

How Students Actually Use AI: Beyond the Cheating Narrative

The widespread assumption that students primarily use AI for cheating doesn’t align with emerging data. Research highlighted in the Teen and Young Adult Perspectives on Generative AI report shows the most common uses are gathering information (53%) and brainstorming ideas (51%). As one student noted, “Not all kids use it to cheat in school.”

Among 14- to 22-year-olds, the demographic with the highest AI adoption, 51% have used generative AI for schoolwork. Usage breaks down across creative and practical applications: 31% create images, 16% use AI for sound generation, and 15% employ it for writing code. The data suggests students view AI as a multipurpose learning tool rather than simply a shortcut.

This shift in perception matters. According to Cengage Group’s 2025 AI in Education report, 65% of higher education students believe AI tools are essential for success. Furthermore, 65% of students believe they know more about AI than their instructors, and 45% wish professors would actively teach AI skills in relevant courses.

Yet anxiety persists. Half of students reported worrying about false accusations of AI-assisted cheating. This fear has created what some describe as a “police state of writing,” where even students not using AI spend extra time editing work to sound “more human.” Jenny Maxwell, Grammarly’s Head of Education, noted that over 500,000 people—mostly students—use Grammarly’s AI and plagiarism detection tool weekly, highlighting the pervasive concern.

Practical Applications: From Robotics to Personalized Learning

At Dr. Martin Luther King Jr. School No. 6 in Passaic, New Jersey, fourth-graders in Selver Perez’s computer applications class work with AI tools to program robots, exploring concepts like self-driving cars and voice-activated assistants. The district exemplifies proactive AI literacy education, moving beyond abstract discussion to hands-on engagement.

The applications extend across subject areas. Teachers use ChatGPT to adapt materials for different reading levels, create differentiated lesson plans, and generate assessment questions. According to educators surveyed by Third Space Learning, teachers particularly value AI’s ability to adjust language complexity, making advanced concepts accessible to younger students.

On the administrative side, AI automates routine tasks like attendance tracking, scheduling, and grading. This automation promises to return time to teachers—a resource more precious than budget dollars in most schools. Early adopters report saving hours weekly, though quantifying these gains remains challenging.

Stanford University’s research through the Generative AI for Education Hub aims to fill knowledge gaps about efficacy. Professor Susanna Loeb, SCALE’s faculty director, emphasized the urgency: “AI tools are flooding K–12 classrooms—some offer real promise, others raise serious concerns—but few have been evaluated in any meaningful way. Education leaders are being asked to make consequential decisions in a data vacuum.”

Broader AI trends in the United States in 2025 show similar patterns: rapid adoption is outpacing systematic evaluation, and enterprises and schools alike are navigating uncharted territory as autonomous systems reshape traditional workflows.

The Training Gap: Teachers Want Support, Not Mandates

Victor Lee, faculty lead for Stanford’s AI + Education program, surveyed teachers on professional development needs related to AI. The findings were clear: educators want to understand how to use AI to teach, how to teach about AI, and how AI actually works. With California and other states mandating AI literacy in curriculum, Lee stressed the need for common frameworks and language.

The challenge appears daunting. RAND data shows that as of spring 2025, only 35% of district leaders provide students with training on AI use. Over 80% of students reported that teachers never explicitly taught them how to use AI for schoolwork. Meanwhile, only 26% of districts planned AI training during the 2024-2025 school year, though 74% committed to teacher training by fall 2025.

The gap creates professional stress. According to Cengage’s research, 82% of higher education instructors cite academic integrity as their top AI concern, followed by worries about bias, accuracy, and lack of training. Teachers find themselves caught between students’ fluency with AI tools and their own limited preparation for guiding that use.

Promising initiatives are emerging. Qualcomm partnered with AI for Education to provide AI literacy training for Thinkabit Lab instructors nationwide, working toward a goal of reaching one million educators. Code.org expanded its AI Foundations Course for high school students with Qualcomm support. The FIRST Robotics program will introduce an AI-compatible vision system for the 2025-26 season, giving high school students hands-on experience with AI-accelerated chips.

Policy Patchwork: States Navigate Uncharted Territory

As of April 2025, only Mississippi had enacted AI-related education legislation—Senate Bill 2426 creating a state AI task force. Yet 20 states introduced AI education bills in 2025, with Alabama, Hawaii, and Maryland advancing proposals through at least one legislative chamber.

The pattern shows evolution from early experimentation toward structured oversight. California’s Assembly Bill 1064, Connecticut’s Senate Bill 2, and Texas’s House Bill 1709 propose oversight boards and “regulatory sandboxes”—controlled environments for testing AI tools before widespread deployment. The approach balances innovation with accountability, following recommendations from the Southern Regional Education Board’s Commission on AI in Education.

State task force reports reveal common priorities. Arkansas’s 2025 report, Georgia’s 2024 analysis, and Illinois’s findings emphasize creating curricular frameworks for AI literacy, investing in educator professional development, ensuring equitable access across socioeconomic divides, and establishing comprehensive risk-management policies.

New York City Public Schools, the nation’s largest district, took a proactive approach. Director of Digital Learning Tara Carrozza described leading professional development for 10,000 staff members, creating a K-12 AI Policy Lab, and building partnerships with research institutions and technology companies. Similarly, Peninsula School District in Washington established an AI action research team and university partnerships to systematically explore implementation.

The Equity Question: Who Benefits, Who Falls Behind

Concerns about educational equity permeate AI discussions. Sixty-one percent of parents, 48% of middle schoolers, and 55% of high schoolers agree that greater AI use will harm students’ critical thinking skills. Only 22% of district leaders shared this concern, suggesting a disconnect between frontline stakeholders and educational leadership.

The digital divide threatens to widen. Most AI platforms offer free versions with limited capabilities alongside paid subscriptions with advanced features. In a system already grappling with resource inequality, any advantage some students gain through premium AI access compounds existing disparities.

Geographic and demographic patterns complicate the picture further. Urban teachers, who represent 68% of the teaching force without AI training, face different challenges than suburban or rural counterparts. The Information sector shows 25% AI adoption among schools—roughly ten times the rate for districts serving communities dependent on accommodation and food services.

Research from Macquarie University in Australia offers encouraging data: students using AI-powered chatbots improved examination results by up to 10% as of March 2025. Whether such gains distribute equitably across different student populations remains an open question requiring rigorous study.

Real-World Impact: Data Points From the Field

The statistics paint a complex picture. According to comprehensive surveys aggregating global data:

Student usage reached 86% globally for studies as of 2025, with 51% of U.S. students using generative AI. Among university students, usage exploded from 66% in 2024 to 92% in 2025. Specifically for assessments, 88% now use generative AI, up from 53% one year earlier.

ChatGPT dominates the landscape—66% of students use it for educational purposes, while Grammarly captures 25% usage. Students average 2.1 AI tools across their courses, suggesting most haven’t settled on a single preferred platform.

Adoption also varies by age and identity. Among 14- to 22-year-olds, 51% use generative AI, though only 4% qualify as daily users. LGBTQ+ teens show 28% adoption, compared to 17% among cisgender or straight young people, a difference researchers attribute to both opportunity and potential negative impacts.

On the teaching side, generative AI tool usage increased 32% between the 2022-2023 and 2023-2024 school years. Currently, 83% of K-12 teachers use generative AI for either personal purposes or school-related activities. However, 32% express mixed feelings about benefits and drawbacks, while 35% remain uncertain about advantages and disadvantages.

The Training Imperative: Building AI Literacy

Tennessee educators offer insight into perceived future value: 60% believe AI skills will benefit students, and 69% think these skills will lead to high-paying jobs. From grade six through university, 59% of teachers expect students to possess basic AI skills—a significant shift in baseline competency expectations.

Major organizations committed substantial resources following the federal push. Houghton Mifflin Harcourt announced in June 2025 a suite of embedded AI supports including lesson plan generators, text translators, and vocabulary scaffolding. These tools integrate directly into HMH’s platform, creating what the company describes as a “tested and trusted one-stop shop” for high-quality instructional materials with AI assistance.

The American Federation of Teachers partnered with OpenAI to train more than 400,000 K-12 educators on practical AI skills, focusing on teacher-led innovation rather than top-down mandates. The collaboration reflects growing recognition that effective AI integration requires educator buy-in and expertise, not just access to tools.

For students, hands-on experience matters most. Programs like the AI-compatible vision system for FIRST Robotics, Qualcomm’s partnership with Experiential Robotics on AI education kits, and Code.org’s AI Foundations Course aim to demystify technology through practical application rather than abstract instruction.

Looking Ahead: Challenges and Opportunities

Stanford’s AI+Education Summit in March 2025 brought together researchers, educators, tech developers, and policymakers to address critical questions. Dan Schwartz, dean of the Stanford Graduate School of Education, shared his vision for AI-infused learning ecosystems emphasizing human-centered design and ethical deployment.

The path forward requires balancing competing priorities. Schools must protect student data while enabling innovative uses of AI. They need to prepare students for an AI-powered workforce while preserving critical thinking skills. Districts must provide equitable access without creating new forms of inequality.

Research will prove essential. Stanford’s partnership with OpenAI to study ChatGPT’s impact on learning outcomes represents the kind of rigorous evaluation needed. The project will examine how students and teachers use the platform, whether it affects knowledge retention and engagement, and how features like “study mode” influence deeper learning outcomes including self-regulation and metacognition.

Professor Loeb framed the stakes clearly: “AI holds enormous potential for education, but without research to understand what truly works, we risk locking in the flaws of our current system—or worse, creating new problems we never intended.”

The Bottom Line

How AI is used in USA schools in 2025 reflects broader tensions in American education: innovation versus caution, equity versus excellence, teacher autonomy versus systematic guidance. The rapid adoption—with 54% of students and 53% of teachers now using AI—has outpaced policy development, training programs, and research validation.

Yet momentum continues building. Federal guidance provides a framework. Major technology companies commit resources. Districts experiment with implementation. Teachers adapt practices. Students integrate AI into learning workflows.

The transformation extends beyond tools and platforms to fundamental questions about education’s purpose. If AI can generate essays, solve math problems, and create presentations, what should students learn? If algorithms can personalize instruction, what’s the teacher’s role? If technology promises efficiency, how do we preserve the human connections central to learning?

These questions don’t have simple answers. They require ongoing dialogue among educators, students, parents, policymakers, technologists, and researchers. The schools getting it right recognize AI not as a solution to be implemented but as a capability to be thoughtfully integrated—one that amplifies rather than replaces human teaching and learning.

As one fourth-grade teacher in Passaic, New Jersey, put it, helping students understand AI tools means showing them “it’s not magic.” It’s technology created by people, deployed by institutions, and shaped by choices made in classrooms, district offices, and policy meetings across America. How those choices unfold will determine whether AI fulfills its promise to transform education for the better, or simply automates existing inequities at scale.

This article draws on research and reporting from the U.S. Department of Education, RAND Corporation, Stanford University’s SCALE Initiative, Education Week, the Digital Education Council, Cengage Group, and analysis from education technology researchers across the United States.